In a previous article, I alluded to the idea that clearing up the confusion around three words (model, schema, and metadata) is really important for our industry. That confusion has a historical reason: over the past four decades, when relational DBMSs ruled the earth, there was no real need to be specific about these three concepts, because the model and the schema were basically intertwined. That has changed with NoSQL, and today we really do have to be more specific because there is more variability: we can choose to have more or less modeling, and more or less schema. Tools like Hackolade, with its polyglot data modeling, have already made that very clear.

The main reason I think being specific about this matters is that it also allows us to be specific about the underlying concerns behind these words.

The concerns are different:

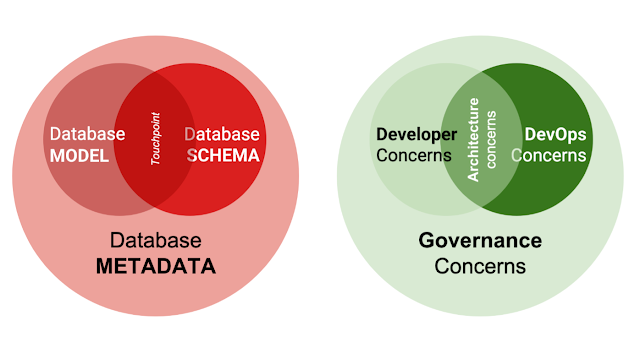

- When we talk about the (conceptual, logical, and even physical) data model, we are actually talking about the concerns of the software development team that creates the software from scratch. This team is responsible for understanding the operational problems of their end users and addressing them with specific software-based solutions. They need to properly UNDERSTAND these problems, which means they need to understand the data used to address them, in constant communication and interaction with the business users. The model, therefore, is a developer concern, and it is of value mostly to that community and its business customers.

- When we talk about the schema (the output of the physical data model), we are talking about the concerns of the IT administration team that is tasked with running and operating the systems the software solution will run on. Of course, the developers will need to interact and work with the schema, but they will do so in support of the rules that the schema applies, so that the system remains manageable, supportable, and, overall, consistently reliable. The administration team makes sure that the hardware, software, security, management environment, monitoring environment, and so on are all properly configured, and that means certain rules need to be defined and enforced. For a database management system, this is what a schema does: it formalizes the rules for the integrity and consistency of the data managed by the system in a contractual, machine-readable agreement. All data stored in the system needs to comply with these rules. Traditionally, “database administrators” (DBAs) take care of this task, but in the modern, agile world, the DevOps (development and operations) team takes care of this function.

- When we talk about the metadata, we are talking about the concerns of the data governance team that is tasked with making sure that the organization's data policies and procedures comply with the rules and regulations the company has to align itself with. That brings certain formalities around quality assurance, documentation, approval processes, reporting, and other capabilities that allow regulators to verify and validate the organization's data-related processes. Interestingly enough, the data governance team will typically also close the loop with the business users, making the metadata partly the business users' concern as well, as the data catalogs are fed back to them for review and self-service analysis.
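To make the idea of a schema as a contractual, machine-readable agreement a bit more concrete, here is a minimal Python sketch. The `customer` fields and the `validate` helper are hypothetical and only illustrate the principle; in practice the DBMS enforces such rules itself, for example through column types and NOT NULL constraints in SQL, or JSON Schema validation in document stores such as MongoDB.

```python
from typing import Any

# Hypothetical schema for a "customer" record: each field maps to
# (expected type, required?). Field names are illustrative only.
CUSTOMER_SCHEMA: dict[str, tuple[type, bool]] = {
    "customer_id": (int, True),
    "name": (str, True),
    "email": (str, False),
}


def validate(record: dict[str, Any], schema: dict[str, tuple[type, bool]]) -> list[str]:
    """Return the list of rule violations; an empty list means the record complies."""
    errors: list[str] = []
    for field, (expected_type, required) in schema.items():
        if field not in record:
            if required:
                errors.append(f"missing required field '{field}'")
            continue
        if not isinstance(record[field], expected_type):
            errors.append(f"field '{field}' should be {expected_type.__name__}")
    # Reject fields the schema does not declare, making this a strict contract.
    for field in record:
        if field not in schema:
            errors.append(f"unexpected field '{field}'")
    return errors


print(validate({"customer_id": 1, "name": "Ada"}, CUSTOMER_SCHEMA))  # []
print(validate({"name": 42, "extra": True}, CUSTOMER_SCHEMA))
```

Rejecting undeclared fields is a design choice: a more schema-light NoSQL design might simply accept them, which is exactly the "more or less schema" variability mentioned above.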

In many ways, we could therefore draw the Venn diagram of these concerns in much the same way as the one for the three concepts (model/schema/metadata) that we outlined in an earlier article.

I believe this is genuinely interesting and important: the three confusing words our industry has been struggling with actually highlight three different types of concerns related to the data modeling industry and profession. And when you think about it, these concerns also point us to different types of value.

3 concerns point to 3 different sources of value for data modeling

This brings me to my last point: the value of data modeling in a modern software development environment. In any market where there are valid and real concerns, there is value in addressing or removing them. Providing solutions to these problems is the essential premise beneath any kind of value creation, and that’s no different for the data modeling software industry.

The Hackolade team has leveraged some really interesting research, done by a variety of different players, to summarize this value: you can read up on it on this page of the Hackolade website, and even take the ROI calculator for a spin if you want. The principle is simple:

ROI % = (Benefit due to Data Modeling − Cost of Investment) / Cost of Investment × 100

All you need to do is fill in some numbers!
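As a minimal sketch of the arithmetic, the formula translates directly into code. The function name and the example figures are hypothetical and not taken from Hackolade's calculator.

```python
def roi_percent(benefit: float, cost_of_investment: float) -> float:
    """ROI % = (benefit due to data modeling - cost of investment) / cost of investment * 100."""
    if cost_of_investment <= 0:
        raise ValueError("cost of investment must be positive")
    return (benefit - cost_of_investment) / cost_of_investment * 100


# Hypothetical figures: a benefit of 150,000 against an investment of 100,000.
print(roi_percent(150_000, 100_000))  # 50.0
```

A benefit smaller than the investment yields a negative ROI. The calculation itself is trivial; the hard part is estimating the two inputs.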

Now here’s the thing: filling in the numbers is always going to be difficult, and not always productive. When I worked on a similar formalised value framework for Neo4j a few years ago, we took a different approach: we specifically stressed that we were not trying to be 200% objective, but rather were trying to find a way to help users articulate the value of the (graph) solution explicitly, rather than leaving it implicit for passersby to take a wild guess at. Ideally, the value will of course be quantifiable, but that does not mean the metrics are supposed to be particularly scientific or even accurate. They are meant to indicate how one could approach the organizational conversation that justifies the time and resources spent on sound data modeling; the specifics can be detailed and argued about at a later date.

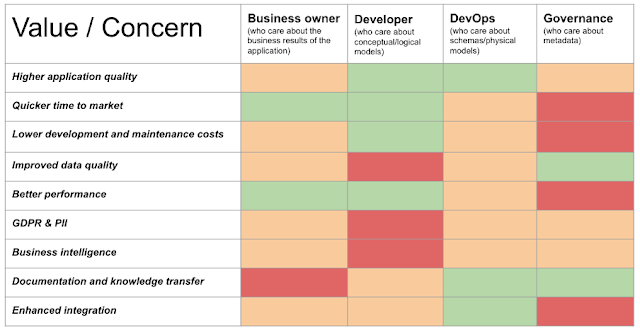

So here’s an interesting exercise: if I had to paint in broad strokes and guess which concerns translate into which kinds of benefits for the organisation, I would probably sketch out something like this:

The table attempts to articulate how strongly each of the four concerns (business/developer/devops/governance) is served by the different sources of data modeling value. Green means that the value would benefit the concern highly, orange means an average contribution, and red means a low contribution. We can of course draw some obvious conclusions from this subjective mapping exercise, specifically with regard to the main users and target audiences of modeling solutions. Based on which of the three words resonates most with a given persona interested in data modeling, we can infer some basic levels of interest that point us towards a more likely match with that target audience. Clearly, we can then translate this into a commercial strategy and a set of targeted campaigns that will not only propel the company, but also help the industry in general.
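One way to make the inference from such a color-coded mapping explicit is to score the colors and total them per concern. The sketch below assumes green = 3, orange = 2, red = 1, and uses placeholder value sources and ratings: they are NOT the contents of the table, only stand-ins to show the mechanics.

```python
# Assumed weights for the color coding (green = high, orange = average, red = low).
COLOR_SCORE = {"green": 3, "orange": 2, "red": 1}

# Placeholder ratings for illustration only; NOT the ratings from the table.
RATINGS = {
    "value source A": {"business": "green", "developer": "green",
                       "devops": "orange", "governance": "red"},
    "value source B": {"business": "orange", "developer": "green",
                       "devops": "red", "governance": "green"},
}


def rank_concerns(ratings: dict[str, dict[str, str]]) -> list[tuple[str, int]]:
    """Sum the color scores per concern and rank concerns from highest to lowest."""
    totals: dict[str, int] = {}
    for per_concern in ratings.values():
        for concern, color in per_concern.items():
            totals[concern] = totals.get(concern, 0) + COLOR_SCORE[color]
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


print(rank_concerns(RATINGS))
# [('developer', 6), ('business', 5), ('governance', 4), ('devops', 3)]
```

With real ratings, the concern with the highest total would hint at which persona a data modeling offering speaks to most directly. The weights themselves are a subjective choice, in the same spirit as the mapping.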

That wraps up some of my current thinking while working with the team at Hackolade. No doubt there is more to be said about this - but for now I think we have enough food for thought :) …

Please let me know if you have any ideas that you would like to share with me, or topics that you would like to discuss.

All the best

Rik
