Part of the confusion in the terminology stems, I believe, from the fact that in traditional relational database management systems, your physical model and your schema were joined at the hip. It would be extremely weird to have one without the other. In fact, in relational data modeling, your schema would literally be the output of your physical modeling, and would therefore be a different representation of the same thing: the physical model would be human readable, and the schema would be machine readable. That all changed with NOSQL database management systems (document databases like MongoDB or graph databases like Neo4j), where you could have “the data be the model”, and where enforcement of the model was completely optional and usually not even considered before the system went into production.

These databases would be called “schemaless” for this reason. But the reality was even worse: in many cases people thought that they could do without not just the schema, but the model as well. This, I believe, has been a grave mistake in our industry – and has led to a host of issues in the adoption of NOSQL. Let’s explore why that is.

Schema vs. Model: the underlying conversation between IT & Business

In many ways, you could think of the different types of model (conceptual > logical > physical) as the representation of the conversation between Business and IT: as you refine the concepts and translate them into logical and physical designs, the IT professionals learn more about the business ideas required for the application in an interactive and collaborative conversation. This conversation has been somewhat of a struggle for decades. I remember my friend Peter Hinssen writing a great book about it in 2009 - and the topic is still very much top of mind!

Just as models represent a conversation between business and IT, you could argue that the degree to which we have more or less schema in our NOSQL data stores is representative of the interaction between IT and its systems. In some cases, our technical staff will allow the data stores to have very little schema enforcement (e.g. at the start of an agile development project, when we do not know many details of the domain yet), and in some cases they will be super strict about schema enforcement (e.g. when a mission critical application is about to go into production).

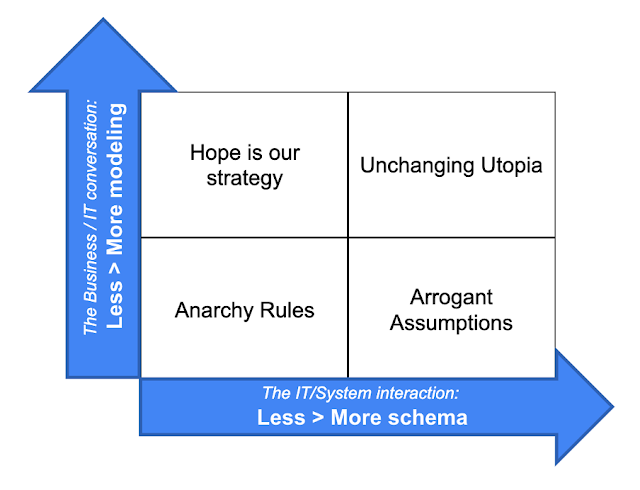

Let’s combine these two ideas: we can either have more or less modeling – and we can have more or less schema. If we think of this as a set of four quadrants, we can see four possibilities:

- We don’t model, and we don’t enforce schema: this means that anarchy rules.

- We model, but we don’t enforce schema: this means that data integrity and consistency challenges will automagically appear. Hope is our strategy.

- We don’t model, but we do enforce schema: which means that we skip the entire modeling conversation, don’t think of the schema as the logical output of the modeling conversation and just go straight to the schema development, assuming that we already know everything about the domain, don’t need a thorough conversation with the users, and will enforce some form of formalized contract (the schema) at our will and choice. Pretty arrogant, no?

- We model, and we enforce schema: we know this approach from our 40-year legacy of building relational systems. There are downsides to it as well of course – specifically around maintaining agility in our development processes.

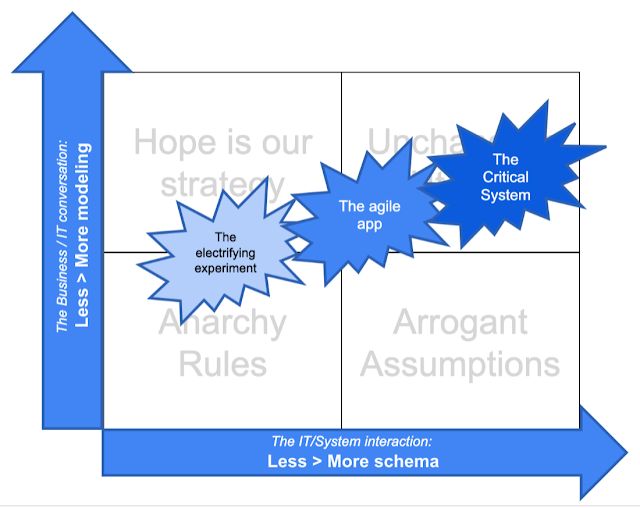

Let’s explore that a little in this graphic:

In the graphic I have positioned three types of software systems, in very broad strokes:

- The electrifying experiment: this is one of those software systems that we have started to see more often, as the cost of software prototyping came down. Through the use of frameworks, libraries, APIs and many other reusable software components, we can in fact deliver an experimental system much more easily. In that case, it would not be necessary or wise to implement too much schema, or to spend too much time modeling out the entire, largely unknown, business model.

- The agile app: this is one step up from the experiment. We have seen quite a few software systems that evolve over time, that start small and become big, that change in breadth and depth significantly over their lifespan. In these types of projects, we probably want more modeling and more schema – but we don’t want to go overboard, yet.

- The Critical System: if you are building a mission critical system powering nuclear reactors or large life-or-death flight control systems, you want a bit more due diligence in the process – and a bit more rigidity in the schema. That’s just because the stakes are high – the system cannot go wrong.
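To make the idea of "more or less schema" a bit more tangible, here is a minimal sketch in plain Python of a data store whose schema enforcement can be dialed up as a system matures. Everything in it is an assumption made for illustration: the `Collection` class, the `Enforcement` levels and the `PERSON_SCHEMA` are hypothetical names, not the API of MongoDB, Neo4j or any other product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Enforcement(Enum):
    """Hypothetical enforcement levels, one per type of system above."""
    OFF = "off"        # the experiment: accept anything
    WARN = "warn"      # the agile app: accept, but record violations
    STRICT = "strict"  # the critical system: reject violations


# Hypothetical schema: required field name -> expected Python type.
PERSON_SCHEMA = {"name": str, "age": int}


def violations(doc: dict, schema: dict) -> list:
    """Return human-readable schema violations for a single document."""
    problems = []
    for key, expected in schema.items():
        if key not in doc:
            problems.append(f"missing field '{key}'")
        elif not isinstance(doc[key], expected):
            problems.append(f"field '{key}' should be {expected.__name__}")
    return problems


@dataclass
class Collection:
    """An in-memory stand-in for a schema-optional data store."""
    schema: dict
    enforcement: Enforcement
    docs: list = field(default_factory=list)
    warnings: list = field(default_factory=list)

    def insert(self, doc: dict) -> None:
        # With enforcement OFF the schema is never even consulted.
        if self.enforcement is Enforcement.OFF:
            problems = []
        else:
            problems = violations(doc, self.schema)
        # STRICT refuses the write; WARN stores it and remembers the problem.
        if problems and self.enforcement is Enforcement.STRICT:
            raise ValueError("; ".join(problems))
        self.warnings.extend(problems)
        self.docs.append(doc)
```

Inserting the same inconsistent document, say `{"name": "Rik", "age": "unknown"}`, into three such collections shows the trade-off: with `OFF` it is stored silently, with `WARN` it is stored and flagged, and with `STRICT` the write is refused. The model (here, the schema dictionary) is the same in all three cases; only the degree of enforcement changes.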

Hope that this article was a useful perspective on how data modeling and schema enforcement represent interesting conversations in our industry. If you have any thoughts on this, I would love to hear from you! Please reach out.

All the best

Rik

PS: also wanted to link in this report “Data Modeling to Support End-to-End Data Architectures - Published 8 February 2019”. In it, Gartner says: “Schema-on-read does not mean, “I don’t have to think about schemas during the design phase.”” and “Schema-on-read should not mean “schema by amateurs.””
