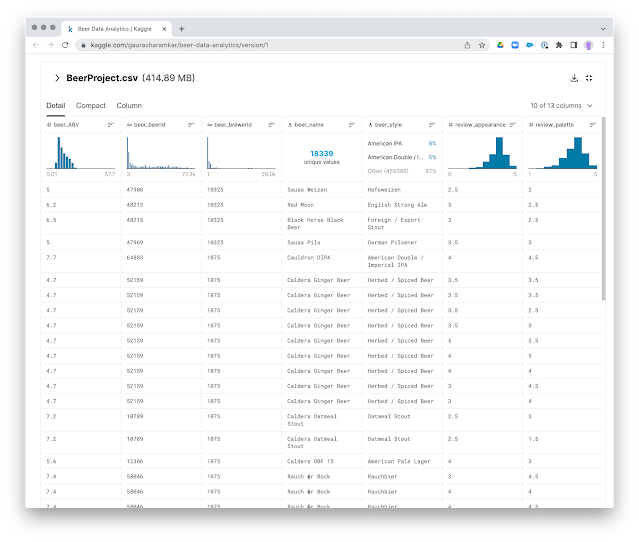

First: find a dataset

So I was ready to take that to the importer.

Preparing the importer: modeling and mapping

Once unzipped, the .csv is a good 414MB. Not huge - but not that small either. So when I loaded it into the importer, I was pleasantly surprised that the structure of the file appeared almost immediately in the left-hand tab of the interface. Now all I needed to do was draw a little graph model and map the fields onto the model.

For nodes this is pretty straightforward: I just select the .csv files that belong together and insert them into the model structure that I drew out. The important thing here is that every node needs an "identifier" property - so that the importer knows what to create/merge at insert time.
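To make the role of that "identifier" property a bit more concrete, here is a minimal Python sketch of create/merge semantics: one node per distinct identifier value, no matter how often that value shows up in the file. This is only an illustration and not how the importer is actually implemented. The column names (`beer_id`, `beer_name`, `brewer_id`, `brewer_name`), the sample rows, and the `merge_nodes` helper are all hypothetical; only the Beer and Brewer nodes and the BREWS relationship come from the model described in this post.

```python
import csv
import io

# Hypothetical sample rows: the real file's columns are not shown in this post.
SAMPLE_CSV = """beer_id,beer_name,brewer_id,brewer_name
1,Beer A,10,Brewer X
2,Beer B,10,Brewer X
1,Beer A,10,Brewer X
"""


def merge_nodes(rows, id_field, property_fields):
    """Keep one node per distinct identifier; repeated rows update the same node."""
    nodes = {}
    for row in rows:
        key = row[id_field]
        # Create the node on first sight of the identifier, reuse it afterwards.
        node = nodes.setdefault(key, {id_field: key})
        for field in property_fields:
            node[field] = row[field]
    return nodes


rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))

beers = merge_nodes(rows, "beer_id", ["beer_name"])
brewers = merge_nodes(rows, "brewer_id", ["brewer_name"])

# A (Brewer)-[:BREWS]->(Beer) relationship is identified by the pair of node identifiers.
brews = {(row["brewer_id"], row["beer_id"]) for row in rows}

print(len(beers), len(brewers), len(brews))
```

Without a stable identifier there would be nothing to key on, and the duplicated row for the first beer would end up as a second node instead of being merged into the first.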

Once this is all done, we are ready to actually run the import. Note that I have not typed a SINGLE LINE OF CODE yet. No Cypher. No APOC. No batching of transactions. No special configurations on the database. Nothing at all.

Running the import

Then we are ready to "push the blue button". Knowing how finicky these types of import procedures were in the past - I took a deep breath and hoped for the best.

But: I am VERY happy to report that it all went super smoothly. A good 5 minutes later, the data importer happily reported the completion of the import. And it even allowed me to take a look at the internals and how it performed the different steps of the task. Here's what happened when it was importing the Beer nodes:

And here you can see what happened when it imported the (Brewer)-[:BREWS]->(Beer) relationships:

So wow, that was really surprisingly easy. Let's see if we can confirm the results in the actual database.

Viewing the results in the Neo4j Browser and Neo4j Bloom

Looking at the data in the Neo4j Browser immediately seemed to confirm the successful import. Here are some node stats. So we are definitely looking at the right type of volumes in terms of imported data.

The schema also looked correct:

So then we are ready to start exploring. Maybe, just maybe, I can find some more tasty tidbits to savour over the weekend. Browser and Bloom seem to be making plenty of nice suggestions.
